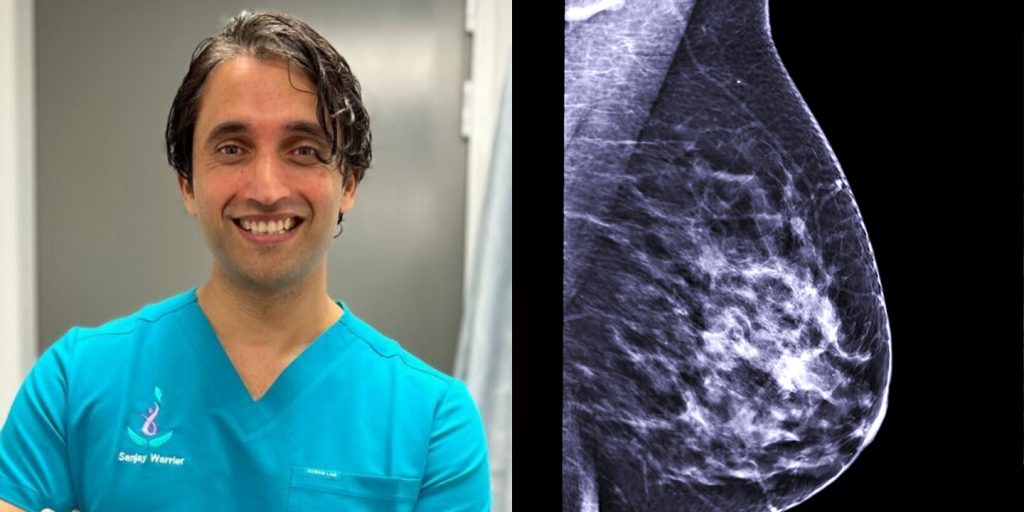

An Australian cancer surgeon is warning people not to use AI tools like ChatGPT to diagnose health symptoms, after a test showed the chatbot suggested delaying medical attention for a potential sign of breast cancer. Sanjay Warrier, an Associate Professor at the University of Sydney with the Royal Prince Alfred Academic Institute, said the advice could be dangerous because some aggressive cancers can progress quickly.

“Some of my more savvy patients were using AI,” Warrier said. “So, I put a question into ChatGPT about redness on the breast, and it suggested that I wait a few weeks to see if it improved. Some cancers are so aggressive that a few weeks can mean the difference between life and death.”

Warrier said growing reliance on tools like ChatGPT is a worrying trend, because it can create a misplaced sense of safety and, in turn, delay proper care. “ChatGPT is not a health service,” said Warrier, a council member of Breast Surgeons of Australia and New Zealand. “It cannot examine you, it cannot feel a lump, and it cannot assess your individual risk.”

A June 2024 study by the University of Sydney found 9.9 per cent of respondents had used ChatGPT to obtain health-related information in the previous six months. More concerningly, 61 per cent of those users had asked at least one higher-risk question, meaning one seeking guidance about taking action that would typically require clinical advice. Warrier understands why people might do it: “It’s convenient and cost-efficient, and it’s never nice to have an examination or talk about some symptoms, so I can understand why people are turning to AI,” he said. “But it comes down to the quality of the advice.”

He said the greatest danger is false reassurance, particularly for people who are already uncertain, anxious, or putting off an appointment. “If an online tool suggests something is likely benign, some people will delay seeking proper care,” he said. Early detection remains critical: “Our biggest driver for outcome is picking up symptoms early,” Warrier said. “Time matters. You should not be typing [symptoms] into a chatbot hoping for reassurance. You should be booking an appointment.”

Warrier also emphasised that symptoms are rarely straightforward. Like searching on “Dr Google”, entering a single symptom can lead to a long list of possible causes, ranging from minor issues to serious illness. “You lose perceptibility using AI,” Warrier said. He added that while AI can provide general information, it can’t interpret symptoms in context, notice subtle physical changes, or weigh up personal and family medical history during an examination.

“We need to see that AI is not gospel,” he said. “The perfect answer is not there yet, particularly in health.”

His message isn’t to reject technology, but to recognise its limits. AI may help people learn about symptoms and improve health awareness, but it can’t replace clinical judgement or a proper medical assessment. “Technology can support healthcare, but it cannot substitute it,” he said.